
The datasheet, which discusses the construction, usage, and limitations of the dataset in more detail, can be found here. The dataset without the duplicates filtered out is also available here. The deduplication script is available here.

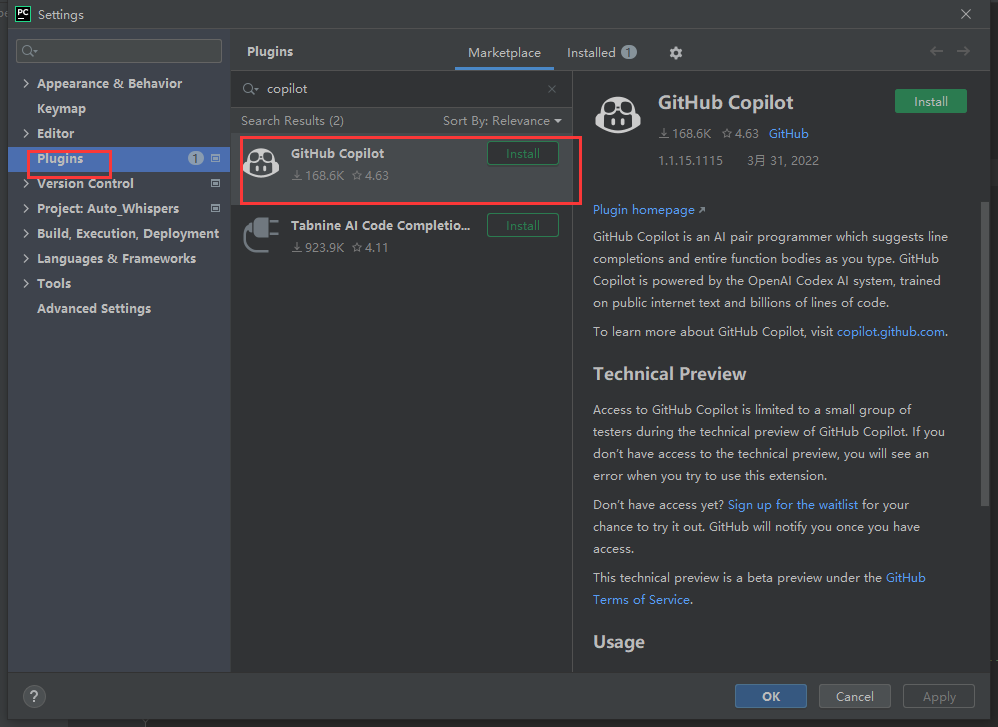

GPT-Code-Clippy (GPT-CC) is an open source version of GitHub Copilot, a language model (based on GPT-3 and called GPT-Codex) that is fine-tuned on publicly available code from GitHub.

The dataset used to train GPT-CC is obtained from SEART GitHub Search using a set of repository selection criteria. These repositories are then combined with all of the GitHub repositories contained in The Pile. The repositories are then filtered for duplicate files. Filtering is performed by running a regular expression over each file in each repository to obtain a list of "variables" (the tokens that contain only alphanumeric characters) and then filtering out any files that contain the same sequence of "variables".
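The duplicate filtering described above can be sketched roughly as follows. This is only an illustration, not the project's actual deduplication script: the function names `variable_fingerprint` and `deduplicate`, the exact regular expression, and the choice to hash the token sequence with SHA-256 are all assumptions made for the example.

```python
import hashlib
import re
from typing import Dict

# Assumption: a "variable" is a maximal run of ASCII letters and digits.
TOKEN_RE = re.compile(r"[A-Za-z0-9]+")


def variable_fingerprint(source: str) -> str:
    """Hash the ordered sequence of alphanumeric tokens in a file."""
    tokens = TOKEN_RE.findall(source)
    # Tokens never contain spaces, so a space is a safe separator.
    joined = " ".join(tokens)
    return hashlib.sha256(joined.encode("utf-8")).hexdigest()


def deduplicate(files: Dict[str, str]) -> Dict[str, str]:
    """Keep the first file seen for each distinct token sequence.

    `files` maps a (hypothetical) file path to its text content.
    """
    seen = set()
    kept = {}
    for path, source in files.items():
        fingerprint = variable_fingerprint(source)
        if fingerprint in seen:
            continue  # same sequence of "variables" as an earlier file
        seen.add(fingerprint)
        kept[path] = source
    return kept


if __name__ == "__main__":
    example = {
        "a.py": "x = 1  # set x\n",
        "b.py": "x=1 #set x",  # differs only in whitespace and punctuation
        "c.py": "y = 2\n",
    }
    print(list(deduplicate(example)))
```

Because only the alphanumeric tokens are compared, files that differ solely in whitespace, punctuation, or operators are treated as duplicates under this sketch, which makes the filter deliberately loose.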

Please refer to our new GitHub Wiki, which documents in detail our efforts to create an open source version of GitHub Copilot.
